HoT: Highlighted Chain of Thought for Referencing Supporting Facts from Inputs

Tin Nguyen*, Logan Bolton*, Mohammad Reza Taesiri, Anh Totti Nguyen

2025

Links: pdf | code | project page

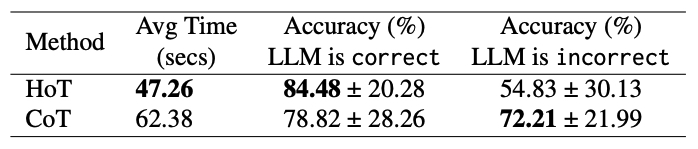

An Achilles heel of Large Language Models (LLMs) is their tendency to hallucinate nonfactual statements. A response mixed of factual and non-factual statements poses a challenge for humans to verify and accurately base their decisions on. To combat this problem, we propose Highlighted Chain-of-Thought Prompting (HoT), a technique for prompting LLMs to generate responses with XML tags that ground facts to those provided in the question. That is, given an input question, LLMs would first re-format the question to add XML tags highlighting key facts, and then, generate a response with highlights over the facts referenced from the input. Compared to vanilla chain of thought prompting (CoT), HoT reduces the rate of hallucination and separately improves LLM accuracy consistently on over 20 tasks from arithmetic to reading comprehension, and logical reasoning. When asking humans to verify LLM responses, highlights help time-limited participants to more accurately and efficiently recognize when LLMs are correct. Yet, surprisingly, when LLMs are wrong, HoTs tend to fool users into believing that an answer is correct.

Acknowledgment: This work is supported by the National Science Foundation under Grant No. 2145767, Adobe Research, and the NaphCare Charitable Foundation.

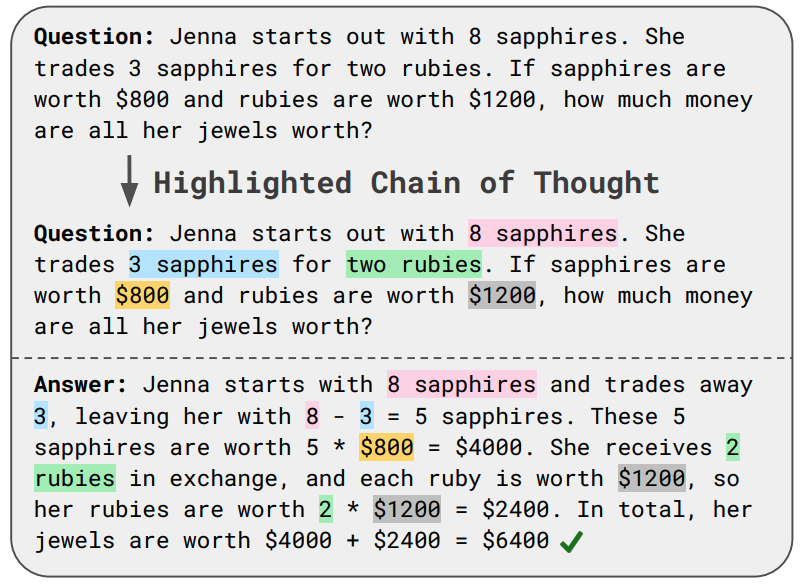

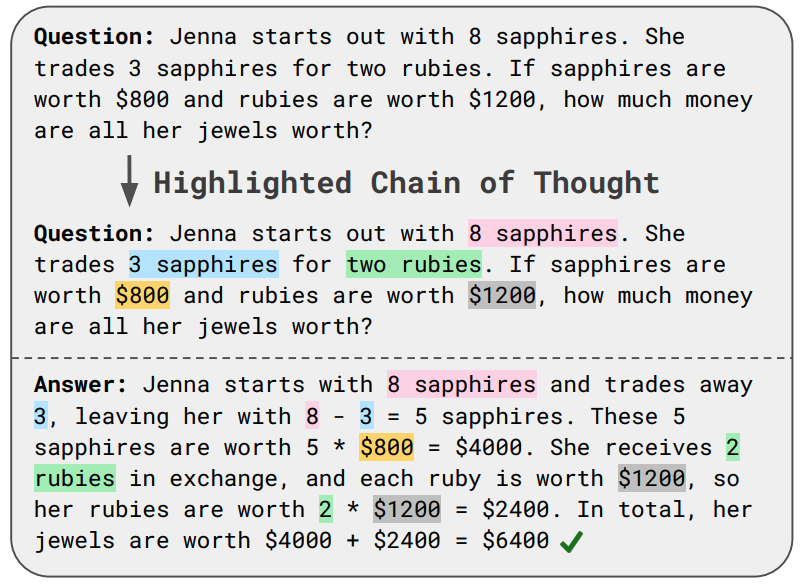

Figure 1: An highlighted chain of thought, composed of a re-formatted question and answer, generated by Gemini–1.5–Pro in response to a GSM8K question.

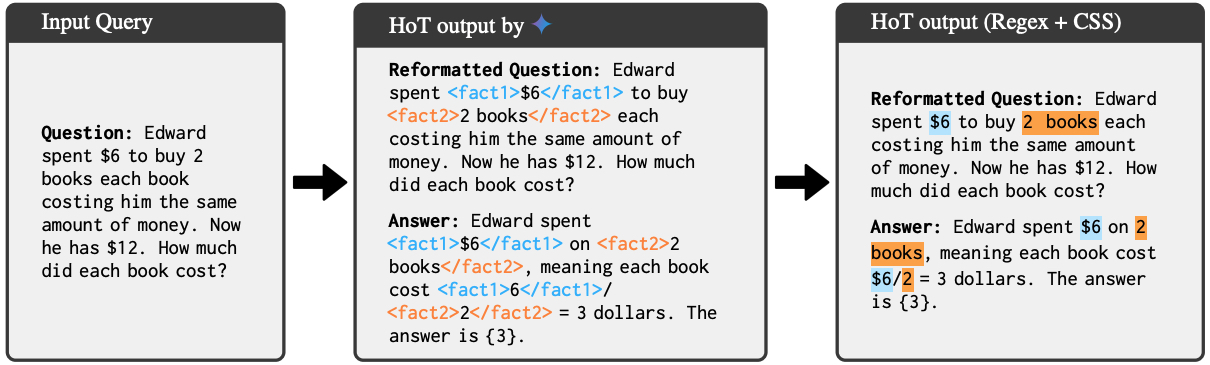

Figure 2: LLMs generate HoT responses by wrapping XML tags around the information that the model determines is the most important. Regex and CSS are then used to visualize the highlights for user readability.

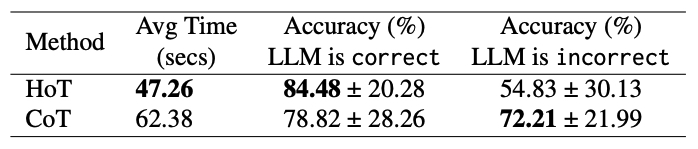

Table 2: Users spend ∼25% less time when verifying chains of thoughts with highlights (compared to without highlights). Highlights tend to cause users to accept LLM answers more, resulting in improved accuracy in correct cases and worse accuracy in incorrect ones.